The Mazefall experiment

Mazefall started as a roguelike with Pac-Man DNA: star-chomping, coin-collecting, fast movement, and a loop that gets surprisingly mean once the maze decides you’ve had enough joy for one run.

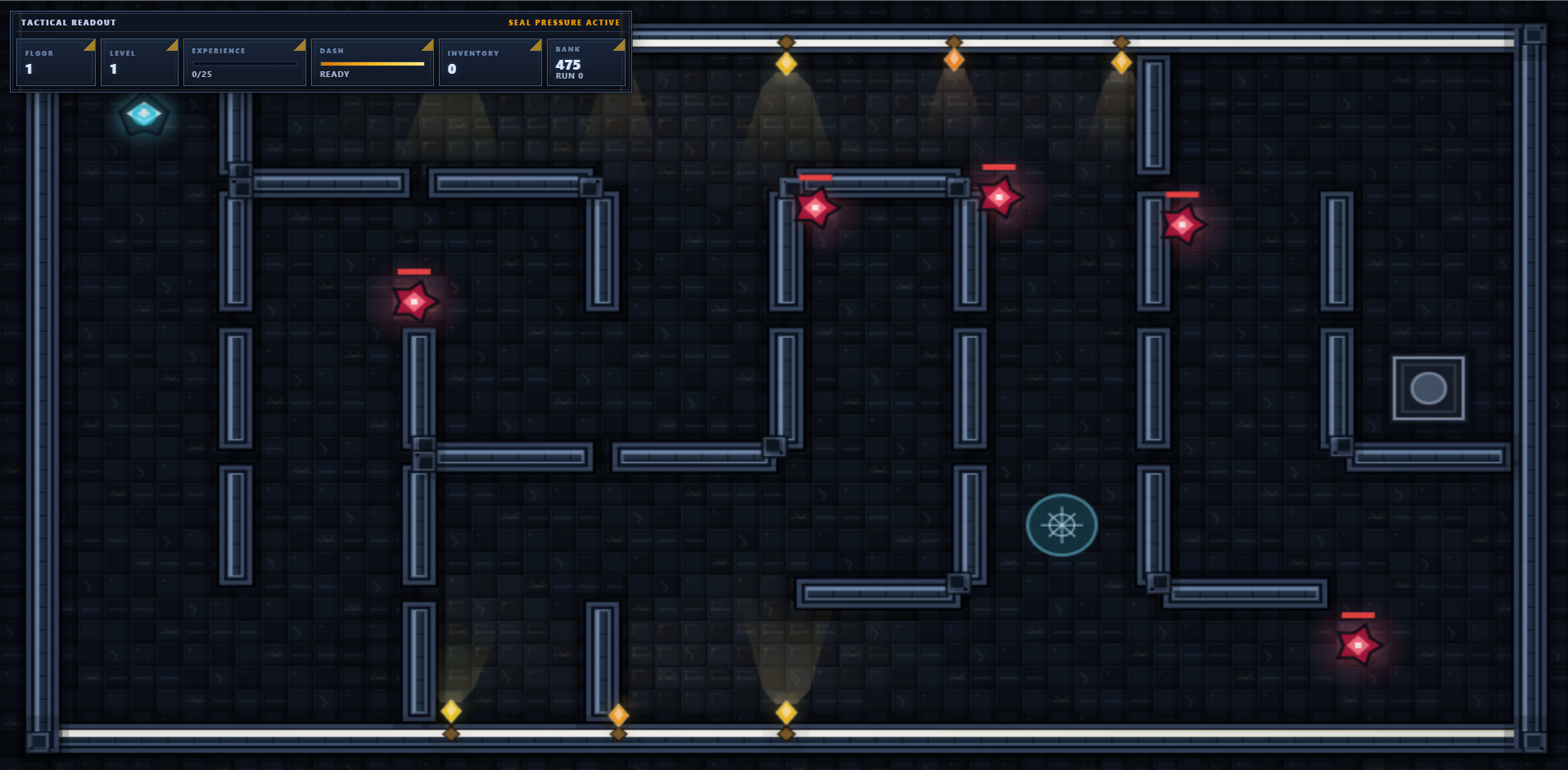

This pass focused on one problem: the game played well, but the art needed to stop looking like a placeholder that had escaped from a prototype dungeon.

The goal was not “make it prettier somehow.” The goal was a repeatable process for giving an agent a coherent pixel-art taste map, then turning that into production-ready game assets.

1. Define the art aesthetic first

The first move was building a visual identity anchor from classic game references. In plain English: use games with taste as a mood board, then force the agent to explain that taste back.

An OpenClaw agent researched the aesthetic details of specific games Eric enjoys, then ran repeated style tests to compare what it thought it understood against the actual source material. Each gap became the next research target. That loop repeated until the style map stopped being vague and started being useful.

The working theory is pretty sharp: an agent already loaded with strong quality-engineering instincts can use those instincts to spot visual-cohesion gaps too. It’s product thinking pointed at art direction.

2. Build a specialized Skill.md instead of begging a general model

Generic image models are decent until you ask them for retro pixel art, at which point they often cough up artifact soup and call it style. That was not going to cut it.

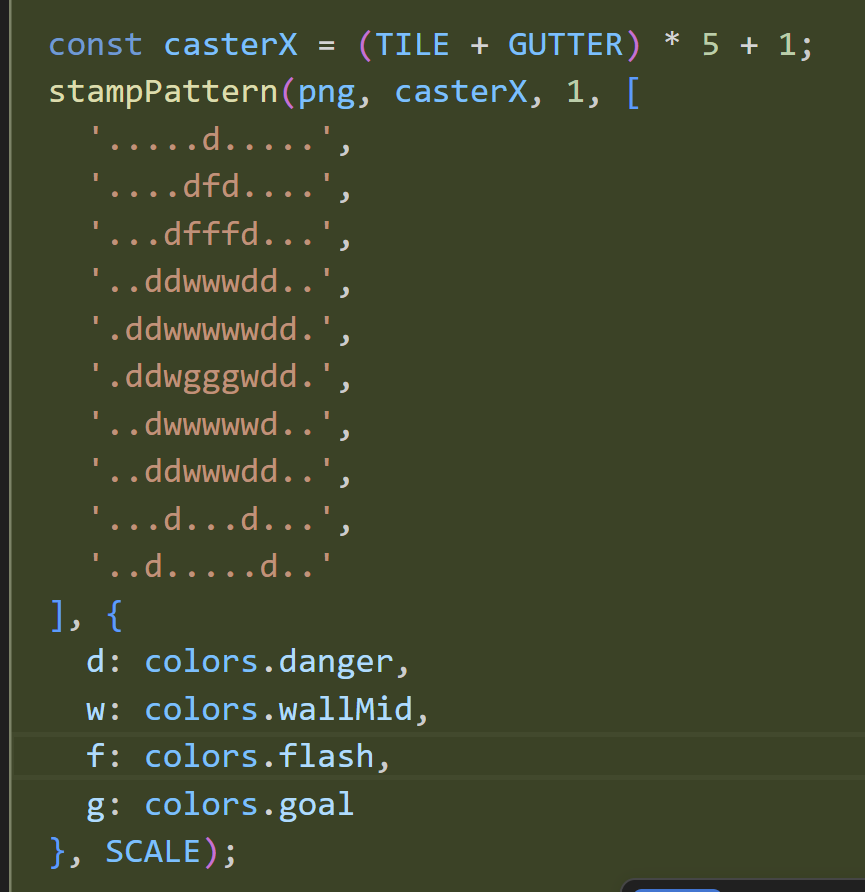

The breakthrough was letting the model use text tokens as the sprite-art substrate itself. Not token names pointing to PNGs on disk. The actual ASCII patterns becoming the sprite logic.

The idea is almost annoyingly elegant: let the model stay in its native medium—tokens—then wrap a sprite system around those tokens.

That meant the agent could reason about sprite construction in a form it already understands well, then produce canonical examples directly inside the Skill.md used for Mazefall.
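To make the idea concrete, here is a minimal Python sketch of what a token-native sprite could look like. Everything in it is an assumption for illustration: the palette symbols, the `COIN` sprite, and the `parse_sprite` and `mirror` helpers are hypothetical, and the real Skill.md format and Mazefall engine may look quite different.

```python
# Hypothetical palette: one character per color. None means transparent.
PALETTE = {
    ".": None,
    "#": (34, 32, 52),     # dark outline
    "o": (251, 242, 54),   # coin body
    "w": (255, 255, 255),  # highlight
}

# An illustrative 6x6 sprite, not one of Mazefall's actual assets.
COIN = """
..##..
.#oo#.
#owoo#
#oooo#
.#oo#.
..##..
"""


def parse_sprite(art, palette):
    """Turn ASCII art into rows of RGB tuples (None for transparent pixels)."""
    rows = art.strip().splitlines()
    width = len(rows[0])
    pixels = []
    for y, row in enumerate(rows):
        if len(row) != width:
            raise ValueError(f"row {y} has width {len(row)}, expected {width}")
        try:
            pixels.append([palette[ch] for ch in row])
        except KeyError as exc:
            raise ValueError(f"row {y} uses unknown symbol {exc.args[0]!r}") from None
    return pixels


def mirror(pixels):
    """Flip a sprite horizontally, e.g. to derive a left-facing variant."""
    return [list(reversed(row)) for row in pixels]


sprite = parse_sprite(COIN, PALETTE)
flipped = mirror(sprite)
```

The appeal of this shape is that the text grid is the asset: the model can read it, edit it, and validate it without ever leaving text.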

3. Put the skill where the app lives

The first two placement attempts were textbook wrong. Dropping the skill into a generic .agents folder felt “industry standard” and still sucked. Moving it into the instance-wide skills library was better, but it invited the exact cross-project bleed Eric had warned about elsewhere.

The third attempt worked because it stopped being abstract: the skill moved into Mazefall itself. Local skill, local project, local context. Cleaner routing. Less noise. Better behavior.

- Attempt 1: Generic editor convention. Convenient. Wrong.

- Attempt 2: Shared instance skill library. Better, but context leakage lurked.

- Attempt 3: App-local skill inside Mazefall. Finally the right fit.

4. Apply the skill in passes, not one heroic leap

The art upgrade followed the sane pattern: ask the agent to handle the work in phases, review each pass, then shave the next layer. Same principle as whittling wood—thin cuts, not theatrical hacking.

The early pass already showed meaningful improvement, but it still had that familiar agentic engineering smell: almost there. Useful, promising, not finished.

5. The illumination dilemma

Strictly speaking, the experiment did not need a lighting solution. Naturally that made it irresistible.

A wall sconce became the gateway drug. Early lighting attempts were rough, then a tutorial on pixel-art light and shadow gave the agent enough structure to stop flailing and start understanding.

Credit where it’s due: the light-and-shadow article from Slynyrd provided the conceptual scaffolding. After some back-and-forth, the revised lighting system started looking properly alive.
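For a feel of what "structure" can mean here, the sketch below shows one common pixel-art lighting convention: step through a small color ramp in hard bands instead of blending smoothly. This is an assumption about the general technique, not a record of what the Slynyrd article prescribes or what Mazefall ships. `WALL_RAMP`, `shade_index`, and `light_map` are hypothetical names.

```python
import math

# Hypothetical four-step ramp for a stone wall, darkest to lightest.
WALL_RAMP = [(24, 20, 37), (58, 44, 72), (120, 84, 96), (214, 160, 110)]


def shade_index(distance, radius, steps):
    """Map distance from the light to a ramp index using hard bands."""
    if distance >= radius:
        return 0  # outside the light: darkest (ambient) shade
    # Closer tiles get higher indices; floor() keeps the bands discrete.
    band = math.floor((1 - distance / radius) * steps)
    return min(band, steps - 1)


def light_map(width, height, light, radius, ramp):
    """Pick a ramp color for every tile based on distance to one light."""
    lx, ly = light
    return [
        [ramp[shade_index(math.hypot(x - lx, y - ly), radius, len(ramp))]
         for x in range(width)]
        for y in range(height)
    ]


# A sconce at the left end of a five-tile corridor.
corridor = light_map(5, 1, light=(0, 0), radius=4, ramp=WALL_RAMP)
```

Quantizing to the ramp keeps the lit area inside the sprite palette, which is usually what stops lighting from looking pasted on.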

6. One more thing: animated sprites and camera effects

Once the static art pass held together, the obvious next bad influence arrived: animation. The agent built out a sprite animation system and camera effects on top of the custom engine.
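The core of a sprite animation system is usually small: pick a frame from elapsed time. Here is a minimal sketch under that assumption. The `Animation` class, its fields, and the frame names are hypothetical and are not taken from the Mazefall engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Animation:
    frames: tuple      # frame identifiers, e.g. keys into a sprite table
    frame_ms: int      # how long each frame stays on screen
    loop: bool = True  # looping animations wrap; one-shots hold the last frame

    def frame_at(self, elapsed_ms):
        """Return the frame to draw after elapsed_ms milliseconds."""
        index = int(elapsed_ms // self.frame_ms)
        if self.loop:
            index %= len(self.frames)
        else:
            index = min(index, len(self.frames) - 1)
        return self.frames[index]


# Hypothetical three-frame flicker for a wall sconce.
flicker = Animation(frames=("sconce_0", "sconce_1", "sconce_2"), frame_ms=120)
current = flicker.frame_at(250)
```

Driving the frame from elapsed time rather than a per-tick counter keeps playback speed independent of frame rate.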

That’s part of the point here. The workflow is repeatable. The agent needs good questions, good constraints, and a clear taste target—not divine inspiration descending from the GPU heavens.

What this taught

- Token-native art direction matters. If your art system fits the model’s native medium, procedural output gets much stronger.

- App-local skills are worth it. They reduce routing noise, keep context tighter, and stop irrelevant style bleed.

- Owning a slice of the product compounds. Agents get sharper when they repeatedly handle the same kind of work inside the same product boundary.

Try the current build of Mazefall at mazefall.ericrhea.com. If you want the full original write-up, screenshots, and framing, the Substack version is “Agentic AI Skills – Pixel Art Skill.md.”