The basic idea

The harness was not trying to be a perfect player. That would have been the wrong kind of clever. It was a glorified Roomba: find a path, murder what matters, avoid obvious stupidity, descend the stairs, and keep doing that until it reached floor 2.

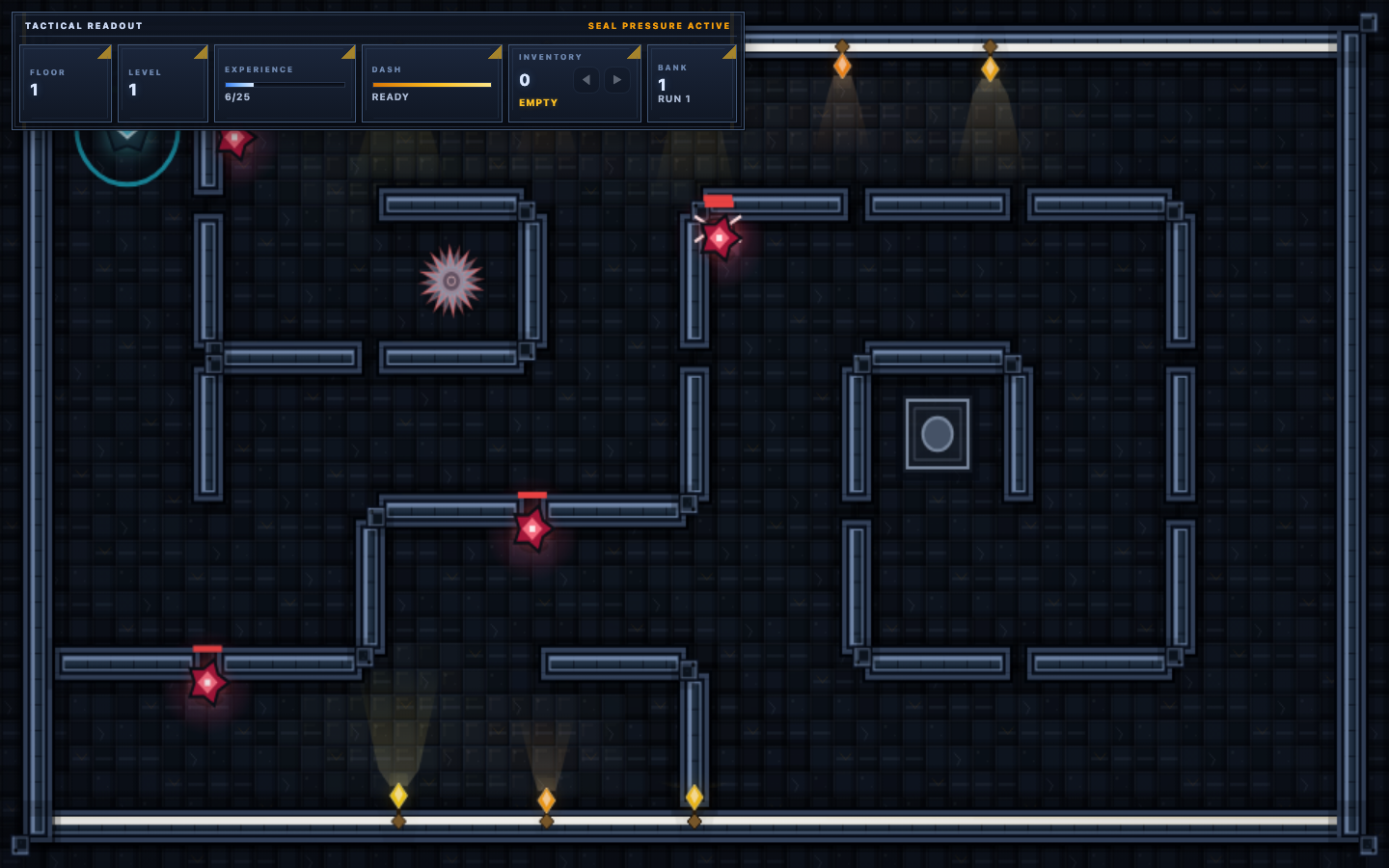

That sounds crude because it is crude. Crude was the point. A game like Mazefall does not need a philosopher-king to prove that the run loop works. It needs a relentless idiot with just enough spatial awareness to stop headbutting walls.

The win condition was narrow and useful: launch the real game, survive the floor, clear the lock, descend, and emit proof. Not “play beautifully.” Not “solve the whole game.” Just prove that the core loop still holds under pressure.

What the harness actually did

The live bot loaded the real browser game with Playwright, imported the live runtime state, then drove the player through direct control logic inside the page. It watched floor number, kill requirements, enemies, hazards, stairs, and the player’s health, then made tiny decisions every 50 milliseconds.

nearest enemy → move or kite → avoid hazards → auto-pick draft → stairs unlocked? path to stairs → tap descend → stop at floor 2

Under the hood, the useful parts were surprisingly pragmatic:

- Grid-aware pathing: it converted world space into room cells, used a breadth-first search over neighboring cells, and rejected segments that would clip walls or live breakables.

- Hazard repulsion: it computed a small “get the hell away from that” vector whenever spikes or other hazards got too close.

- Combat bands: when sharing a cell with an enemy, it changed behavior by distance: retreat when close, strafe at mid-range, pursue at longer range.

- Health-aware cowardice: low health made it back off harder instead of cosplaying bravery.

- Autonomous progress: it auto-selected upgrades, auto-descended stairs, and logged milestones like `[BOT] reached floor 2`.

- Proof capture: it recorded video and a final screenshot so success was not just a cheerful lie in a terminal.
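
To make the grid-aware pathing concrete, here is a minimal sketch of breadth-first search over room cells. It is not the harness's actual code. The string-based grid, the `#` marker for blocked cells, and the names `world_to_cell` and `bfs_path` are all assumptions for illustration, and the segment check against walls and live breakables is left out.

```python
from collections import deque
from typing import List, Optional, Tuple

Cell = Tuple[int, int]  # (col, row); hypothetical cell representation


def world_to_cell(x: float, y: float, cell_size: float) -> Cell:
    """Map a world-space position to integer (col, row) grid coordinates."""
    return (int(x // cell_size), int(y // cell_size))


def bfs_path(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Shortest 4-neighbor path from start to goal, or None if unreachable.

    Each grid row is a string; '#' marks a blocked cell (an assumed stand-in
    for a wall or a live breakable).
    """
    rows, cols = len(grid), len(grid[0])

    def walkable(cell: Cell) -> bool:
        col, row = cell
        return 0 <= row < rows and 0 <= col < cols and grid[row][col] != "#"

    if not (walkable(start) and walkable(goal)):
        return None

    came_from = {start: None}  # also serves as the visited set
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while current is not None:
                path.append(current)
                current = came_from[current]
            return path[::-1]
        col, row = current
        for nxt in ((col + 1, row), (col - 1, row), (col, row + 1), (col, row - 1)):
            if walkable(nxt) and nxt not in came_from:
                came_from[nxt] = current
                queue.append(nxt)
    return None


room = [
    "..#.",
    ".##.",
    "....",
]
path = bfs_path(room, (0, 0), (3, 2))
```

BFS is enough here because every step costs the same. On a grid this small there is little reason to reach for anything fancier until the bot starts failing for pathing reasons.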

Why it was so effective

1. It tested outcomes, not implementation trivia

The harness did not care how elegant the code was. It cared whether the game still worked as a game. That is the right level for catching regressions that unit tests politely step around.

2. It ran against the real runtime

This was not a toy model of Mazefall. It loaded the actual browser build, touched the real state, used the real room geometry, and dealt with the real timing and rendering environment.

3. It was just smart enough

Most harnesses fail by being too dumb to finish or too ambitious to stabilize. This one sat in the sweet spot: BFS pathing, collision-aware movement, and a few combat heuristics. Enough to clear the floor. Not enough to become a research project.

4. It converted vibe checks into repeatable evidence

Human playtesting is excellent at noticing feel and terrible at consistency. The Roomba bot gave a repeatable baseline: can a competent stubborn machine still survive, clear, and descend?

5. It exposed system interactions

Mazefall’s bugs were often not isolated defects. They lived in the interplay between hazards, pathing, lock states, combat feedback, mobile UI, and progression. A live bot stirs those systems together until the weak seam rips.

6. It was cheap to rerun

That matters more than people admit. A slightly ugly harness you will run ten times is worth more than a cathedral of test architecture nobody wants to touch.

How it helped “solve” Mazefall

“Solved” here does not mean the bot beat the whole game and ascended as dungeon royalty. It means it became a reliable proof mechanism for the core loop. Once Mazefall had a bot that could repeatedly reach floor 2, whole classes of uncertainty became testable instead of theatrical.

- If stairs stopped unlocking properly, the run stalled.

- If pathing or collision got weird, the bot jammed itself into geometry like a cursed vacuum cleaner.

- If hazards became unfair or invisible, survival cratered.

- If upgrade flow or restart state broke, the bot exposed it fast.

- If a visual-only change actually damaged readability, combat outcomes told on it.

The important shift was epistemic: Mazefall stopped being “I think the run loop still feels okay” and started being “the harness can still clear and descend on the live build.” That is a much less stupid sentence.

Can we recreate it?

Yes. Absolutely. This kind of harness is reproducible because it is built from boring parts, and boring parts are glorious.

Part 1: Launch the real app

Use Playwright or an equivalent browser runner. Start the real game page, not a mocked scene, and wait until the live state is stable enough to touch.

Part 2: Import or expose state cleanly

The harness needs to observe enough truth to make decisions: player position, room geometry, enemies, hazards, stairs, health, and progression state.
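
One way to keep that observation honest is to parse whatever the page hands back into a small typed snapshot before any tactic touches it. The sketch below assumes the game state arrives as a JSON-like dict. Every field name in it (`maxHealth`, `killsRemaining`, `stairsUnlocked`, and so on) is hypothetical, and room geometry is omitted for brevity.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Optional, Tuple

Vec = Tuple[float, float]


@dataclass(frozen=True)
class Snapshot:
    """One immutable reading of the state the bot decides on."""
    floor: int
    health: float
    max_health: float
    kills_remaining: int
    stairs_unlocked: bool
    player: Vec
    stairs: Optional[Vec]
    enemies: List[Vec]
    hazards: List[Vec]


def _vec(raw: Dict[str, Any]) -> Vec:
    return (float(raw["x"]), float(raw["y"]))


def parse_snapshot(raw: Dict[str, Any]) -> Snapshot:
    """Convert a raw state dump into a Snapshot.

    Raises KeyError when a required field is missing, so a renamed or
    dropped field breaks the run immediately instead of silently.
    """
    stairs = raw.get("stairs")  # stairs may not exist yet on a fresh floor
    return Snapshot(
        floor=int(raw["floor"]),
        health=float(raw["health"]),
        max_health=float(raw["maxHealth"]),
        kills_remaining=int(raw["killsRemaining"]),
        stairs_unlocked=bool(raw["stairsUnlocked"]),
        player=_vec(raw["player"]),
        stairs=_vec(stairs) if stairs is not None else None,
        enemies=[_vec(e) for e in raw["enemies"]],
        hazards=[_vec(h) for h in raw["hazards"]],
    )
```

Failing on a missing field is deliberate: a harness that quietly defaults health to zero will produce very confident nonsense.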

Part 3: Pick one useful goal

Do not start with “beat everything.” Start with a milestone that means the loop works: clear one floor, survive ninety seconds, complete a tutorial, or kill a boss phase.

Part 4: Give it minimal tactics

BFS or A* pathing, hazard avoidance, one target-selection rule, one retreat rule, one upgrade-selection rule. Keep it legible enough that you can debug it without summoning a priest.
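
As a rough illustration of how small those rules can be, here is a sketch of a target-selection rule, a hazard-avoidance vector, and a distance-banded retreat rule that gets more cowardly at low health. The thresholds (`close`, `far`, `low_health`) and the linear falloff are invented defaults, not values from the Mazefall bot.

```python
import math
from typing import List, Optional, Tuple

Vec = Tuple[float, float]


def nearest(player: Vec, enemies: List[Vec]) -> Optional[Vec]:
    """Target selection: the closest enemy, or None when the room is empty."""
    return min(enemies, key=lambda e: math.dist(player, e), default=None)


def hazard_repulsion(player: Vec, hazards: List[Vec], radius: float) -> Vec:
    """Sum of push-away vectors from every hazard inside `radius`.

    Strength falls off linearly from 1 at the hazard to 0 at the radius,
    so the closest hazards dominate the result.
    """
    px, py = player
    rx = ry = 0.0
    for hx, hy in hazards:
        dx, dy = px - hx, py - hy
        dist = math.hypot(dx, dy)
        if 0.0 < dist < radius:
            strength = (radius - dist) / radius
            rx += dx / dist * strength
            ry += dy / dist * strength
    return (rx, ry)


def combat_band(distance: float, health_fraction: float,
                close: float = 60.0, far: float = 160.0,
                low_health: float = 0.3) -> str:
    """Pick 'retreat', 'strafe', or 'pursue' from the distance to the target.

    Below `low_health` the retreat band doubles, so a hurt bot backs off
    from distances where a healthy one would still strafe.
    """
    if health_fraction < low_health:
        close *= 2
    if distance < close:
        return "retreat"
    if distance < far:
        return "strafe"
    return "pursue"
```

Each rule is a pure function of positions and health. That means you can test it without a browser and read it without a whiteboard.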

Part 5: Capture proof

Record video, log milestones, save a screenshot, and fail loudly when the milestone is missed. If there is no artifact, somebody will eventually claim success from pure optimism.
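
Here is a sketch of what a proof run might look like with Playwright's Python sync API: launch, record video, collect logs, take a final screenshot, and raise if the milestone never shows up. It assumes the bot already runs inside the page and writes its milestones to the browser console. The `run_proof` and `milestone_reached` names, the output paths, and the timeout are all made up for illustration.

```python
import time
from pathlib import Path
from typing import Iterable, List


def milestone_reached(log_lines: Iterable[str],
                      marker: str = "[BOT] reached floor 2") -> bool:
    """True if any collected log line contains the milestone marker."""
    return any(marker in line for line in log_lines)


def run_proof(url: str, out_dir: str = "proof", timeout_s: float = 120.0) -> None:
    """Run one live session, save artifacts, and raise if the milestone is missed."""
    # Imported lazily so the helper above works without Playwright installed.
    from playwright.sync_api import sync_playwright

    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    log_lines: List[str] = []

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        # Video is recorded per context and finalized when the context closes.
        context = browser.new_context(record_video_dir=str(out / "video"))
        page = context.new_page()
        page.on("console", lambda msg: log_lines.append(msg.text))
        page.goto(url)

        # Poll the collected console output until the marker appears or time runs out.
        deadline = time.monotonic() + timeout_s
        while time.monotonic() < deadline and not milestone_reached(log_lines):
            page.wait_for_timeout(250)

        # Capture artifacts on success and failure alike.
        page.screenshot(path=str(out / "final.png"))
        (out / "console.log").write_text("\n".join(log_lines), encoding="utf-8")
        context.close()
        browser.close()

    if not milestone_reached(log_lines):
        raise RuntimeError(f"milestone not reached within {timeout_s:.0f}s; see {out}/")
```

The artifacts are saved before the success check on purpose. A failed run with a video is a bug report; a failed run without one is a rumor.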

The current Mazefall proof bot is specific to this game, but the pattern is portable. In fact, portability is the whole seduction.

Broader implications

The obvious implication is game testing. The less obvious one is that this style of harness is a strong pattern for agent-built software in general.

- For games: build narrow outcome-driven bots to catch regressions in movement, readability, interaction timing, and progression.

- For web apps: create task bots that complete a real user goal instead of merely checking whether buttons still exist.

- For agent systems: use scenario harnesses that validate “can the system actually finish the job” instead of over-indexing on component correctness.

- For product iteration: harnesses make it safer to tune visuals, pacing, economy, and friction because you preserve at least one hard baseline while everything else shifts.

There is also a cultural implication here. Once teams see a little specialized Roomba bot produce better truth than a stack of hand-wavy status updates, they stop treating proof as optional garnish. They start asking for evidence by default. Good. They should.

Limits and warnings

This kind of harness can absolutely overfit. A bot that reliably reaches floor 2 can still miss late-run balance failures, edge-case soft locks, and all the subtle human “this feels awful” problems that only a person notices.

So the right posture is not “replace human playtesting.” The right posture is “stop making humans do the dumbest part alone.” Let the bot cover the repeatable baseline. Let humans judge taste, surprise, tension, and delight.

In other words: the Roomba harness is not a design oracle. It is a brutally useful continuity check. That is enough to matter.

The real lesson

The harness worked because it did not try to be universal. It was pointed. It knew the live game, the immediate objective, and the minimum tactics required to stop failing stupidly.

That is the broader pattern I trust most right now: not giant abstract evaluation frameworks, but small vicious proofs tied to real outcomes. Build the little machine that can embarrass your assumptions. Then rerun it every time you touch something important.